Every program running on your computer right now behaves as if it has exclusive access to a large, contiguous block of memory starting at address zero. That view is an illusion, carefully constructed by the operating system and hardware working together. The mechanism behind it, the separation of logical and physical memory addresses, is one of the most fundamental concepts in computer science and forms the foundation of process isolation, virtual memory, and modern operating system security. Without this abstraction, running many programs side by side safely would be far harder: hardware-enforced memory protection would not exist, and a single buggy application could corrupt the entire system. Whether you are a student learning operating systems fundamentals, a developer debugging memory issues, or a systems engineer optimizing application performance, understanding the difference between logical and physical memory addresses underpins everything from how your browser renders a page to how cloud platforms isolate thousands of virtual machines on shared hardware.

Memory Addressing in Modern Operating Systems

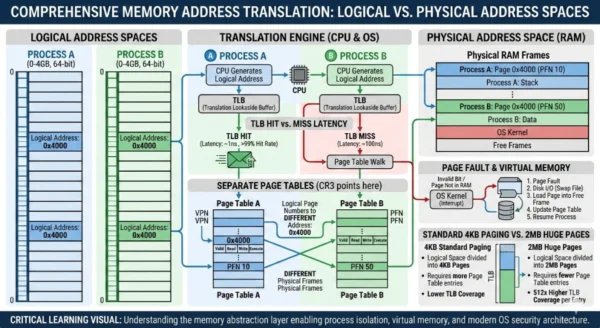

Modern operating systems manage memory through a two-layer addressing model that separates what programs see from what hardware uses. This separation solves one of the oldest problems in computing: how do you safely run multiple programs simultaneously on a single machine with limited physical memory, without any one program being able to read or corrupt another’s data? The answer is the logical-to-physical address translation system — a collaboration between the operating system software and the Memory Management Unit hardware that occurs billions of times per second on every running computer.

Logical Address: The Virtual Abstraction Layer

Definition

A logical address, also called a virtual address, is the memory address generated by the CPU during program execution. It represents the address from the process’s own perspective — a private, isolated view of memory that the program believes to be real and contiguous, starting from zero. Logical addresses do not correspond directly to physical locations in RAM. They are abstract references that the operating system and Memory Management Unit translate into actual physical addresses before any hardware memory access occurs. This abstraction is the foundation of modern multitasking: every process receives its own complete logical address space, completely independent from all other processes running on the same machine, regardless of how physical memory is actually allocated.

Advantages

- Process isolation: Each process operates in its own independent address space, preventing one program from reading or corrupting another’s memory

- Simplified programming: Developers write code using consistent addresses starting from zero without knowledge of physical memory layout or other processes

- Virtual memory enablement: Logical addresses can reference more memory than physically exists, allowing programs to use disk storage as extended RAM transparently

- Memory protection: Operating system enforces read, write, and execute permissions at the page level through logical address space management

- Flexible allocation: Physical memory can be assigned non-contiguously while the program sees a clean contiguous logical address space

- Relocation transparency: Programs can be moved to different physical memory locations without modifying any code or pointers inside the program itself

Disadvantages

- Translation overhead: Every memory access requires MMU translation from logical to physical, introducing latency that TLB caching mitigates but cannot fully eliminate

- TLB misses: Cache misses in the Translation Lookaside Buffer force full page table walks, each adding tens to hundreds of nanoseconds; workloads with large or fragmented access patterns can spend a significant share of their run time on translation

- Page table memory cost: Maintaining page tables for each process consumes physical memory, scaling with the number of active processes and their address space sizes

- Fragmentation: Internal fragmentation occurs when process memory needs do not align perfectly with fixed page sizes, wasting a portion of each allocated page

- Thrashing risk: When the working set of active pages exceeds available physical RAM, the system degrades severely as pages are continuously swapped to disk

- Debugging complexity: Memory addresses visible in debuggers are logical addresses, requiring additional steps to correlate with physical locations during low-level troubleshooting

Logical Address Space Components:

- Address Space: The complete range of logical addresses a process can generate, starting at zero and extending to the maximum addressable size defined by the architecture

- Page Number: The upper bits of a logical address identifying which page within the process address space is being referenced

- Page Offset: The lower bits identifying the specific byte location within the referenced page, passed unchanged through the MMU translation

- Virtual Memory Regions: Distinct sections of logical address space including code, heap, stack, and memory-mapped files, each with their own permissions

- Address Binding: The mechanism by which symbolic program references are associated with logical addresses, occurring at compile time, load time, or execution time

Physical Address: The Hardware Reality

Definition

A physical address is the actual location of data in the computer’s RAM hardware — the real, unique identifier of every byte of memory installed in the machine. Unlike logical addresses, which are per-process abstractions managed by software, physical addresses are fixed hardware locations that correspond directly to rows and columns of memory cells in DRAM modules. User programs never directly see or manipulate physical addresses. All program memory accesses go through the logical address layer, which the MMU translates to physical addresses transparently at hardware speed. The operating system kernel manages physical address space allocation — assigning physical memory frames to processes, reclaiming them when processes terminate, and managing the page tables that define the logical-to-physical mapping for every running process.

Advantages

- Direct hardware addressing: Physical addresses are the form the memory controller and bus actually consume, so no further translation step is needed once one is known

- Uniqueness guarantee: Every physical address is globally unique within the system, eliminating any ambiguity about which memory location is being referenced

- DMA operations: Direct Memory Access controllers use physical addresses to transfer data between devices and RAM without CPU involvement for maximum throughput

- Hardware debugging: Embedded systems and kernel developers use physical addresses to inspect and modify memory directly during low-level hardware bring-up

- Memory-mapped I/O: Hardware registers exposed through physical address space enable direct device communication for drivers and firmware

- Predictable layout: Physical memory layout follows a fixed, known structure defined by hardware, making it reliable for system-level operations requiring precise memory control

Disadvantages

- Hard size limit: Physical address space is bounded absolutely by installed RAM — programs cannot use more memory than physically exists without virtual memory abstraction

- Security risk: Direct access to physical addresses bypasses all OS protections, enabling programs to read or corrupt any memory location including kernel data structures

- No isolation: Without the logical address layer, processes share a single flat address space making it impossible to prevent one program from accessing another’s data

- Fragmentation challenges: External fragmentation in physical memory occurs when free memory exists but not in contiguous blocks large enough to satisfy allocation requests

- Relocation impossibility: Programs bound directly to physical addresses cannot be moved without rewriting all internal references — making dynamic memory management infeasible

- Complexity for programmers: Requiring applications to manage physical addresses directly would demand awareness of hardware layout, other processes, and OS memory usage at all times

Physical Address Space Components:

- RAM Frames: Fixed-size blocks of physical memory, equal in size to logical pages, into which the OS loads active process pages for execution

- Kernel Space: Reserved physical memory region containing operating system code, data structures, and page tables inaccessible to user processes

- User Space Frames: Physical memory frames dynamically allocated by the OS to process pages as needed during execution

- Memory-Mapped I/O: Physical address ranges mapped to hardware device registers rather than RAM, enabling device driver communication

- Physical Address Bus: The hardware pathway carrying physical addresses from the MMU to memory controllers, with width determining maximum addressable RAM on the platform

MMU, Paging and Address Translation Deep Dive

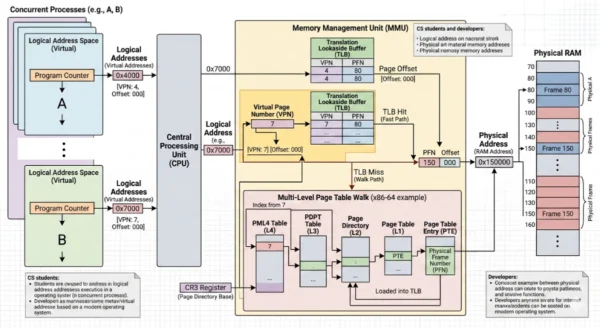

How the MMU Translates Addresses

- CPU generates logical address during instruction execution, splits into page number and offset

- MMU first checks the Translation Lookaside Buffer for a cached mapping of the page number

- On TLB hit, physical frame number is retrieved immediately with minimal latency penalty

- On TLB miss, MMU performs page table walk through multi-level page tables in memory

- Page table entry provides physical frame number and access permission flags

- MMU combines physical frame number with unchanged page offset to form complete physical address

- Page fault triggered if the page table entry is marked not present; the OS then loads the page from disk into RAM and the access is retried

x86-64 Four-Level Paging Structure

- 48-bit virtual addresses divided across four page table levels plus a 12-bit page offset

- PML4 table indexed by bits 47-39, pointing to Page Directory Pointer Table entries

- PDPT indexed by bits 38-30, pointing to Page Directory entries for each 1GB region

- Page Directory indexed by bits 29-21, pointing to individual Page Tables for 2MB regions

- Page Table indexed by bits 20-12, providing physical frame number for the 4KB page

- Bits 11-0 form the page offset, passed unchanged through all translation levels

- Full walk requires four memory reads before reaching data, making TLB caching critical

Address Translation Step-by-Step Workflow

TLB Hit — Fast Path Translation

- Program instruction references logical address (e.g., 0x7fff5fbff6ac)

- MMU extracts page number from upper bits of logical address

- MMU checks TLB cache for existing virtual-to-physical mapping

- TLB hit found — physical frame number retrieved from cache immediately

- MMU combines physical frame number with page offset from logical address

- Complete physical address sent to memory controller for RAM access

- Data returned to CPU; the translation itself adds roughly a cycle, and the overall access time then depends on whether the data sits in cache or DRAM

TLB Miss — Page Table Walk

- MMU checks TLB, finds no cached mapping for the requested page number

- MMU begins page table walk starting from CR3 register pointing to PML4 table

- Four sequential memory reads traverse PML4, PDPT, PD, and PT levels

- Page Table entry found with physical frame number and permission flags

- Permissions checked; a protection fault is raised if the access violates them, which the OS typically reports to the process as a segmentation fault

- TLB updated with new mapping for future accesses to the same page

- Physical address constructed and memory access completed — latency 10-100x slower than TLB hit

Memory Translation Mechanisms Compared

| Translation Aspect | Paging | Segmentation |

|---|---|---|

| Division Unit | Fixed-size pages and frames, typically 4KB on modern systems | Variable-size segments corresponding to logical program divisions like code, data, stack |

| External Fragmentation | Eliminated — any free frame can hold any page regardless of size | Present — variable segment sizes leave gaps in physical memory that cannot be used |

| Internal Fragmentation | Present — final page of each allocation may be partially unused | Minimal — segments sized to match actual data requirements |

| Modern Usage | Dominant mechanism in all modern operating systems including Linux and Windows | Legacy on x86, largely superseded by paging for memory protection on modern platforms |

| Translation Cache | TLB caches recent page number to frame number mappings for fast repeated access | Segment registers cache base and limit values for active segments |

Use Cases and Real-World Applications

When Logical Addresses Matter Most

- Application development: All userspace programs work exclusively with logical addresses — pointers in C, references in Java, and memory allocations in every high-level language

- Virtual machine isolation: Hypervisors use nested paging to give each VM its own guest-physical address space, inside which the guest OS builds ordinary logical address spaces, isolating thousands of tenants on shared physical cloud hardware

- Memory-mapped files: OS maps file contents into logical address space allowing programs to access disk data through normal pointer operations without explicit I/O calls

- Shared libraries: Multiple processes map the same physical library pages into their own logical address spaces, reducing physical memory usage while maintaining isolation

- Address space layout randomization: Security mechanism randomizing where code, heap, and stack appear in logical address space to prevent exploitation of known addresses

When Physical Addresses Matter Most

- Device drivers: Kernel drivers use physical addresses to configure DMA transfers between hardware devices and RAM without CPU involvement

- Embedded systems: Microcontrollers without MMU hardware operate directly in physical address space, requiring developers to manage memory layout manually

- Bootloader development: System firmware operates in physical address space before the OS initializes virtual memory during the boot process

- Kernel memory allocators: OS kernel tracks physical frame availability and allocation through data structures keyed on physical addresses

- Hardware debugging: JTAG debuggers and hardware analyzers use physical addresses to inspect RAM contents during bring-up of new hardware platforms

Industry and Domain Application Patterns

| Domain | Logical Address Usage | Physical Address Usage |

|---|---|---|

| Cloud Computing | VM logical address spaces providing tenant isolation on shared hardware clusters | Hypervisor physical memory allocation across thousands of concurrent virtual machines |

| Embedded Systems | RTOS logical memory regions for task isolation in resource-constrained MCU environments | Direct physical addressing for memory-mapped peripheral registers in bare-metal firmware |

| Operating Systems | User process address spaces, shared library mapping, memory protection enforcement | Physical frame allocator, page table management, kernel heap and direct map regions |

| Game Development | Large virtual address spaces for streaming open-world game assets into logical memory | Console hardware physical memory layout optimization for GPU and CPU shared memory |

| Security Research | ASLR bypass research, logical address space inspection, heap spray analysis | Row-hammer attacks targeting physical DRAM cells, cold-boot memory acquisition forensics |

14 Critical Differences: Logical vs Physical Memory Addresses

| Aspect | Logical Address | Physical Address |

|---|---|---|

| Generation | Generated by the CPU during program execution as instructions reference memory | Computed by the Memory Management Unit from the logical address and page table |

| Physical Existence | Does not physically exist in hardware — a virtual abstraction managed by software | Directly corresponds to a real, physical location in installed RAM hardware |

| User Visibility | Visible and accessible to user programs — all pointers and references are logical addresses | Hidden from user programs entirely — only the kernel and hardware interact with physical addresses |

| Uniqueness | Not globally unique — the same logical address value exists in every process address space | Globally unique across the entire system — each physical address maps to exactly one RAM location |

| Size Limit | Can exceed physical RAM: with 48-bit virtual addresses, x86-64 provides a 256TB logical address space, part of which is reserved for the kernel | Bounded by the physical address width of the platform and, for RAM, by what is actually installed |

| Contiguity | Appears contiguous to programs but may map to non-contiguous physical frames in RAM | Always represents a real, sequential location — physically contiguous within each frame |

| Stability | Remains constant for a program’s lifetime — the same logical address always refers to the same data | Can change as the OS relocates pages between RAM frames or swaps them to disk and back |

| OS Management | Managed by the operating system through page table creation, mapping, and protection enforcement | Not directly managed per-process — the OS manages physical frames as a shared system resource |

| Security Role | Enables process isolation and ASLR by giving each process its own isolated address space | Protected from direct user access — bypassing physical address protection enables serious exploits |

| Translation Required | Must be translated to physical address before every memory access via MMU and page tables | Used directly by memory hardware without further translation after MMU conversion |

| Address Binding | Bound at compile time, load time, or execution time depending on the linking model used | Assigned dynamically at runtime when the OS allocates physical frames to process pages |

| Virtual Memory | Foundation of virtual memory — allows processes to address more space than physically available | Limited to actual hardware — virtual memory extends the effective space beyond physical limits |

| Referenced By | All user-space software: application code, compilers, debuggers, and runtime environments | Hardware components: memory controllers, DMA engines, and kernel memory management subsystems |

| Debugging Context | Addresses shown in application debuggers, core dumps, and userspace memory profiling tools | Addresses used in kernel debuggers, hardware analyzers, and physical memory forensic tools |

Implementation and Code Examples

Understanding Logical Addresses in C

The following example demonstrates how C programs work exclusively with logical addresses, and how the same logical address value (the pointer) can exist in multiple processes simultaneously without conflict — because each process has its own independent logical address space:

    #include <stdio.h>
    #include <stdlib.h>

    int main(void) {
        int value = 42;
        int *ptr = &value;

        // ptr holds a LOGICAL address, not the actual RAM location.
        // Two different processes can both have a pointer with this value
        // without conflict, because each lives in its own address space.
        printf("Logical (virtual) address: %p\n", (void *)ptr);
        printf("Value at logical address: %d\n", *ptr);

        // Dynamic allocation also returns logical addresses
        int *heap_val = malloc(sizeof *heap_val);
        if (heap_val == NULL) {
            return 1;  // allocation failed
        }
        *heap_val = 100;
        printf("Heap logical address: %p\n", (void *)heap_val);
        printf("Value: %d\n", *heap_val);

        // The MMU silently translates every access above to
        // physical RAM addresses, invisible to this program
        free(heap_val);
        return 0;
    }

    // Example output (addresses vary between runs and systems):
    // Logical (virtual) address: 0x7fff5fbff6ac
    // Value at logical address: 42
    // Heap logical address: 0x55a3c2f4a2b0
    // Value: 100
    // Note: physical RAM addresses for these locations are
    // completely different and never visible to this program

Simulating Logical to Physical Address Translation

This simulation demonstrates how the MMU splits a logical address into page number and offset, then uses a page table to derive the physical address — the core of how every memory access works in a paged operating system:

    #include <stdio.h>
    #include <stdint.h>

    #define PAGE_SIZE 256       // 256 bytes per page (simplified)
    #define NUM_PAGES 16        // Process has 16 logical pages
    #define PHYSICAL_FRAMES 8   // Only 8 physical frames available

    // Simplified page table: maps logical page number to physical frame number
    int page_table[NUM_PAGES] = {
        3, 7, 1, 5, -1, 2, -1, 6,     // Pages 0-7 (-1 = not loaded in RAM)
        0, 4, -1, -1, -1, -1, -1, -1  // Pages 8-15
    };

    void translate_address(uint16_t logical_addr) {
        // Step 1: Extract page number and offset from logical address
        int page_number = logical_addr / PAGE_SIZE;  // Upper bits
        int page_offset = logical_addr % PAGE_SIZE;  // Lower bits

        printf("\n--- Address Translation ---\n");
        printf("Logical Address: 0x%04X (%u)\n",
               (unsigned)logical_addr, (unsigned)logical_addr);
        printf("Page Number: %d\n", page_number);
        printf("Page Offset: %d\n", page_offset);

        // Step 2: Look up physical frame in page table (MMU operation)
        if (page_number >= NUM_PAGES) {
            printf("ERROR: Segmentation fault: address out of bounds\n");
            return;
        }

        int frame_number = page_table[page_number];
        if (frame_number == -1) {
            printf("PAGE FAULT: Page %d not in physical memory; OS loads from disk\n",
                   page_number);
            return;
        }

        // Step 3: Compute physical address
        // Physical address = (frame_number * PAGE_SIZE) + page_offset
        uint16_t physical_addr = (uint16_t)((frame_number * PAGE_SIZE) + page_offset);
        printf("Physical Frame: %d\n", frame_number);
        printf("Physical Address: 0x%04X (%u)\n",
               (unsigned)physical_addr, (unsigned)physical_addr);
    }

    int main(void) {
        // Test various logical addresses
        translate_address(100);   // Page 0, offset 100 -> Frame 3
        translate_address(300);   // Page 1, offset 44  -> Frame 7
        translate_address(1024);  // Page 4, offset 0   -> Page fault
        return 0;
    }

    // Output:
    //
    // --- Address Translation ---
    // Logical Address: 0x0064 (100)
    // Page Number: 0
    // Page Offset: 100
    // Physical Frame: 3
    // Physical Address: 0x0364 (868)
    //
    // --- Address Translation ---
    // Logical Address: 0x012C (300)
    // Page Number: 1
    // Page Offset: 44
    // Physical Frame: 7
    // Physical Address: 0x072C (1836)
    //
    // --- Address Translation ---
    // Logical Address: 0x0400 (1024)
    // Page Number: 4
    // Page Offset: 0
    // PAGE FAULT: Page 4 not in physical memory; OS loads from disk

Implementation Best Practices

Memory Management Best Practices

- Use paging over segmentation for modern OS design — fixed page sizes eliminate external fragmentation and simplify physical frame management

- Size working sets to fit within physical RAM to avoid thrashing — profile memory access patterns to understand actual working set requirements

- Leverage huge pages (2MB or 1GB) for large datasets to reduce TLB pressure and page table overhead in memory-intensive workloads

- Enable ASLR in production environments to randomize logical address layout and prevent exploitation of known address assumptions

- Use memory-mapped files to leverage the OS logical address layer for efficient file I/O without explicit buffer management

- Understand page alignment requirements when implementing custom allocators or working with DMA buffers in driver development

Common Pitfalls to Avoid

- Never assume a logical address is the same as a physical address. Even in the kernel, the two differ by a constant offset only inside specific direct-map regions; everywhere else the mapping is arbitrary

- Avoid storing raw pointers in shared memory or files — logical addresses are per-process and meaningless to other processes or on program restart

- Do not allocate working sets larger than available physical RAM — virtual memory disk swapping can degrade performance by orders of magnitude

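- Never attempt to access physical addresses directly from userspace. Every pointer a process dereferences is translated as a logical address, so a physical address used as a pointer names unrelated or unmapped memory and typically ends in a segmentation fault; privileged interfaces that expose raw physical memory bypass OS protections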

- Avoid excessive small allocations that fragment the heap and increase page table pressure — prefer pooled or arena allocation for performance-sensitive code

- Do not ignore page fault frequency in performance profiling — high fault rates indicate working set overflow and predict severe performance degradation

Performance, Security and Optimization

TLB Hit Rate Impact

High hit rate (>99%): Near-zero address translation overhead

Low hit rate: 10–100x slower memory access per miss

Page Fault Cost

Minor fault (page in RAM): Microseconds to resolve

Major fault (disk swap): Milliseconds — 1000x slower

Huge Page Benefit

Standard 4KB pages: Baseline TLB coverage

2MB huge pages: 512x more memory per TLB entry

Security Mechanisms Built on Address Separation

| Security Feature | How Logical/Physical Separation Enables It | Protection Provided |

|---|---|---|

| Process Isolation | Each process receives its own logical address space with no overlap | Prevents any process from reading or modifying another process’s memory |

| ASLR | OS randomizes where code, heap, and stack appear in logical address space | Defeats exploits relying on predictable memory layout for code injection |

| NX/XD Bit | Page table entries carry a no-execute flag for each mapped page, checked by the MMU during translation | Prevents execution of data injected into heap or stack memory regions |

| Kernel/User Separation | Kernel pages mapped with privilege flags inaccessible from user logical space | Prevents userspace programs from reading or corrupting kernel memory structures |

| Guard Pages | Unmapped logical address regions placed around sensitive allocations | Triggers immediate page fault if buffer overflow extends beyond allocated bounds |

Performance Optimization Techniques

TLB Optimization Strategies

- Locality of reference: Access memory sequentially rather than randomly to maximize TLB hit rates through spatial locality exploitation

- Huge pages: Use 2MB or 1GB pages for large working sets. Each 2MB entry covers 512x more memory than a 4KB entry (a 1GB entry covers 262,144x), dramatically reducing miss rates

- Working set sizing: Profile actual memory access patterns and size allocations to fit active data within TLB coverage

- NUMA awareness: On multi-socket systems, allocate memory on the same NUMA node as the executing CPU to minimize remote memory access latency

- Page coloring: Advanced technique distributing pages to minimize cache conflict misses in CPU set-associative cache hierarchies

Virtual Memory Optimization

- Memory-mapped I/O: Map files directly into logical address space to leverage OS page cache instead of duplicate read buffers

- Demand paging: Allow OS to load pages on first access rather than pre-loading entire address space, improving startup latency

- Copy-on-write: Fork processes sharing physical pages until modification, reducing actual physical memory consumption for spawned processes

- Transparent huge pages: Enable OS automatic promotion of frequently accessed page regions to huge pages without application modification

- madvise hints: Tell the OS your memory access patterns using madvise() so it can optimize prefetching and eviction decisions accordingly

Choosing the Right Memory Model for Your Context

Matching Memory Model to Development Context

Understanding when you are working with logical versus physical addresses is not just academic — it determines which tools you use, what assumptions are valid, and what failures are possible. Application developers exclusively live in the logical address world. Kernel and firmware engineers must understand both layers. The practical consequences of confusing the two range from inefficient code to catastrophic security vulnerabilities. Matching your mental model to the actual addressing layer of your development context is foundational to writing correct, efficient, and secure systems code.

Context-Based Decision Matrix

| Development Context | Address Layer You Work In | Key Considerations |

|---|---|---|

| Application Development | Logical addresses exclusively — all pointers are virtual | Memory safety, allocation efficiency, working set optimization, ASLR compatibility |

| OS Kernel Development | Both — kernel direct map maps logical to fixed physical offset | Physical frame management, page table construction, TLB flush correctness |

| Device Driver Development | Both — DMA uses physical addresses, driver code uses logical | Physical address acquisition via DMA APIs, cache coherency, IOMMU configuration |

| Embedded / Bare Metal | Physical addresses directly — no MMU in many MCUs | Manual memory layout, linker script configuration, peripheral register mapping |

| Hypervisor / VMM Development | Three layers — guest logical, guest physical, host physical | Nested page tables, EPT/NPT configuration, guest physical to host physical mapping |

| Security Research | Both — logical for exploitation, physical for hardware attacks | ASLR bypass techniques, Rowhammer physical targeting, memory forensics acquisition |

Key Design Patterns for Memory Management

Application-Level Memory Patterns

Developers working at the application layer should follow these patterns to work efficiently within the logical address model:

- Use standard allocators (malloc, new) and trust the OS to manage physical placement

- Favor sequential memory access patterns that exploit spatial locality and TLB efficiency

- Use memory-mapped files for large dataset access instead of read/write system calls

- Profile working set size to ensure active data fits in physical RAM before deployment

- Enable address sanitizers during development to catch logical address misuse early

Systems-Level Memory Patterns

Engineers working at or below the kernel layer must manage both address spaces explicitly:

- Use kernel-provided helpers (for example virt_to_phys and phys_to_virt in Linux, which are valid only for directly mapped kernel addresses) rather than manual arithmetic for address conversion

- Acquire physically contiguous memory through appropriate kernel allocators for DMA operations

- Flush TLB entries explicitly after modifying page table entries that affect active mappings

- Use IOMMU for DMA on systems where physical address access from devices must be restricted

- Validate physical address ranges against memory map before establishing device mappings

Understanding Memory Addresses in Modern Computing

The distinction between logical and physical memory addresses is not merely an academic concept — it is the architectural foundation that makes modern computing possible. Every program you use, every cloud service you access, and every container running in a Kubernetes cluster depends on this separation functioning correctly billions of times per second.

Key Logical Address Takeaways:

- Generated by CPU — every pointer and reference in your program is a logical address

- Per-process and isolated — same value in two processes refers to different physical memory

- Can exceed physical RAM — foundation of virtual memory and demand paging

- Security enforced at this layer — ASLR, page permissions, and process isolation

- Translated transparently by MMU — zero application code change required

- Essential knowledge for application developers, systems programmers, and CS students

Key Physical Address Takeaways:

- Real hardware location — corresponds to actual RAM cells in installed memory modules

- Globally unique — each physical address maps to exactly one byte across the entire system

- Bounded by installed RAM — cannot reference memory that does not physically exist

- Managed by kernel — only the OS allocates and tracks physical frame assignments

- Direct hardware access — required for DMA, device drivers, and bare-metal development

- Critical knowledge for kernel developers, embedded engineers, and hardware architects

Practical Recommendation for Developers in 2026:

If you are writing application code — web services, mobile apps, desktop software, or containerized microservices — you work exclusively with logical addresses and the OS handles everything else. Focus on working set efficiency, memory access locality, and avoiding leaks. If you are writing kernel modules, device drivers, embedded firmware, or hypervisor code, understanding both layers is non-negotiable — getting physical addressing wrong causes data corruption, security vulnerabilities, and hardware damage. For students learning operating systems, mastering logical-to-physical address translation through paging is the single concept that unlocks understanding of virtual memory, process isolation, memory protection, and modern OS architecture. Everything in systems programming builds on this foundation — invest the time to understand it deeply.

Whether you are approaching this topic as a student building your first OS, a developer debugging a memory corruption issue, or an architect designing memory-efficient distributed systems, the logical versus physical address distinction is one of those foundational concepts that rewards deep understanding with clearer thinking about every memory-related problem you encounter throughout your career.
