The Kubernetes deployment decision has never carried higher operational stakes than in 2026. As AWS EKS vs self-managed Kubernetes becomes one of the most debated infrastructure choices for platform engineering teams, organizations must carefully weigh control plane ownership, operational overhead, AWS ecosystem integration, and total cost of ownership before committing to either path. Amazon EKS now commands approximately 42% of the managed Kubernetes market, with 79% of Kubernetes users globally opting for managed services over self-hosted clusters. Yet a significant segment of enterprises still choose to operate their own Kubernetes infrastructure, trading managed convenience for unrestricted customization and potentially lower long-term costs. Whether you are a developer building cloud-native applications, an SRE managing production clusters, or an IT leader architecting multi-year platform strategy, this comprehensive guide examines both deployment models through technical, operational, and financial perspectives to help you make the right decision for your organization.

Managed Kubernetes Landscape in 2026

Container orchestration has matured dramatically since Kubernetes first reached production readiness. In 2026, the question is no longer whether to use Kubernetes but rather how to deploy and operate it. The AWS EKS vs self-managed Kubernetes debate sits at the heart of platform engineering decisions, representing a fundamental trade-off between operational simplicity and infrastructure freedom that every growing organization eventually faces.

The container orchestration market itself reached $1.38 billion in 2026, growing at a 17.2% compound annual growth rate, reflecting the centrality of Kubernetes infrastructure to modern software delivery. AWS announced EKS in late 2017 and made it generally available in 2018, responding to customer demand for managed Kubernetes rather than to an AWS-originated product strategy. That origin story matters because EKS was built to match upstream Kubernetes behavior rather than replace it, making it a faithful managed layer rather than a proprietary fork. Self-managed Kubernetes, by contrast, gives teams direct control over every component from the API server to etcd to the container runtime interface, enabling configurations that managed services cannot or will not support.

AWS EKS: Managed Control Plane Deep Dive

Definition

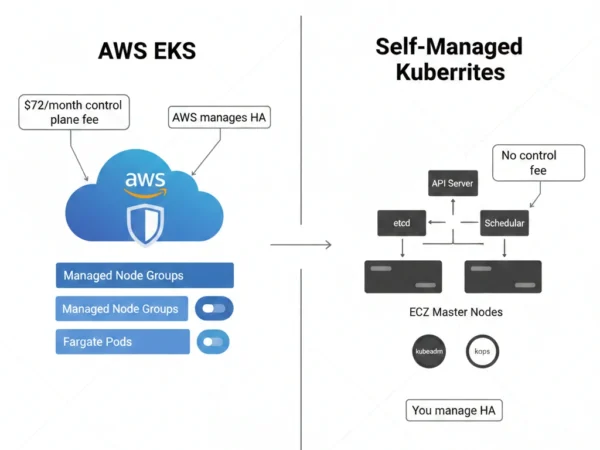

Amazon Elastic Kubernetes Service (EKS) is AWS’s fully managed Kubernetes service that abstracts control plane management from the operator, handling the Kubernetes API server, etcd distributed datastore, scheduler, and controller manager across multiple AWS Availability Zones. When you create an EKS cluster, AWS provisions a dedicated, highly available control plane that runs entirely within AWS infrastructure and is invisible to the customer. You retain full management of the data plane, including worker nodes, pod scheduling logic, networking configuration, and application deployments. EKS is certified Kubernetes-compatible, meaning any application running on upstream Kubernetes operates identically on EKS without code modifications, while also gaining native integration with IAM, VPC, ELB, CloudWatch, ECR, and dozens of other AWS services.

Advantages

- Zero control plane management: AWS handles etcd, API servers, upgrades, patching, and multi-AZ failover automatically

- Deep AWS integration: Native IAM roles for service accounts (IRSA), VPC CNI, AWS Load Balancer Controller (formerly the ALB Ingress Controller), and CloudWatch Container Insights

- Multiple compute modes: Managed node groups, Fargate serverless, self-managed EC2, and EKS Auto Mode for complete flexibility

- EKS Anywhere: Extend the same EKS experience to on-premises and edge environments using your own hardware

- Karpenter integration: AWS-developed, open-source node autoscaler providing faster, more cost-efficient node provisioning than the Kubernetes Cluster Autoscaler

- Enterprise compliance: SOC, PCI, HIPAA, ISO, FedRAMP eligible, and extensive compliance documentation for regulated industries

Disadvantages

- Control plane fee: $0.10 per cluster per hour ($72/month) charged even when no workloads are running

- AWS vendor lock-in: Deep IAM, VPC, and service integration creates migration friction when moving to other cloud providers

- Version lag: New Kubernetes versions typically require 1-4 weeks for EKS certification and availability after upstream release

- Limited control plane visibility: Cannot SSH into master nodes, access etcd directly, or modify control plane configuration

- Networking complexity: VPC CNI assigns real VPC IPs to pods, consuming subnet address space rapidly at scale

- Hidden costs: NAT Gateway, data transfer, EBS volumes, and CloudWatch logs accumulate significantly beyond the base control plane fee

EKS Compute Options Explained:

- Managed Node Groups: AWS provisions and manages EC2 Auto Scaling groups and handles node lifecycle operations, including updates and replacements, with minimal configuration.

- Fargate: Each pod runs in an isolated serverless micro-VM with no nodes to manage, billed per vCPU-second and memory-second.

- Self-Managed Nodes: You provision and register EC2 instances directly, enabling custom AMIs, specialized instance configurations, and maximum flexibility.

- EKS Auto Mode: AWS fully automates compute, storage, and networking provisioning using Karpenter under the hood, representing the most managed option available.

- EKS Anywhere: Deploy EKS on-premises or on other clouds using AWS-supported Kubernetes distributions with centralized management.

Self-Managed Kubernetes: Full Control Model

Definition

Self-managed Kubernetes refers to deploying and operating the complete Kubernetes stack yourself, including control plane components such as the API server, etcd, scheduler, and controller manager, on infrastructure you provision and maintain. On AWS, this typically means running control plane nodes on EC2 instances using tools like kubeadm, kops, or Kubespray, with full responsibility for cluster bootstrapping, certificate management, etcd backup and recovery, version upgrades, and security hardening. Self-managed Kubernetes provides unrestricted access to every configuration parameter, enables running any Kubernetes version including pre-release builds, and avoids the $0.10/hour per-cluster control plane fee that EKS charges, although you pay for the EC2 instances that host the control plane instead. Organizations with mature platform engineering capabilities, specialized compliance requirements, or cost optimization mandates at very large scale may find that self-managed Kubernetes provides capabilities a managed service cannot match.

Advantages

- Complete control: Configure every control plane parameter, admission webhook, API flag, and etcd setting without restriction

- No control plane fees: Eliminate the $72/month per-cluster fee, which adds up across dozens or hundreds of clusters, though you pay for the EC2 instances that host the control plane instead

- Any Kubernetes version: Run latest upstream versions immediately without waiting for EKS certification, or pin to older versions beyond EKS support windows

- Cloud agnostic: Identical architecture deploys across AWS, Azure, GCP, on-premises, and bare metal without provider-specific abstractions

- Custom networking: Choose any CNI plugin including Cilium, Calico, Flannel, or Weave without VPC IP address consumption constraints

- etcd access: Direct etcd access enables custom backup strategies, disaster recovery testing, and audit capabilities impossible with managed services

Disadvantages

- Substantial operational burden: Control plane management, certificate rotation, etcd maintenance, and upgrade orchestration require dedicated expertise

- High availability complexity: Multi-master setup, etcd quorum management, and load balancer configuration require careful planning and ongoing maintenance

- Security responsibility: All control plane hardening, API server authentication, and etcd encryption fall entirely on your team

- Upgrade risk: Kubernetes upgrades across multi-node clusters require careful orchestration with potential for extended maintenance windows

- No AWS managed integrations: Must manually configure IAM integration, load balancer controllers, EBS CSI driver, and other AWS-specific components

- Incident responsibility: Control plane failures, etcd corruption, and API server outages require immediate team response with no AWS SLA backing

Self-Managed Kubernetes Deployment Tools:

- kubeadm: Official Kubernetes bootstrapping tool that initializes control plane nodes and joins worker nodes with minimal configuration, suitable for most production deployments.

- kops: Kubernetes Operations tool specifically designed for cloud deployments including AWS, managing cluster lifecycle from creation through upgrades with opinionated defaults.

- Kubespray: Ansible-based deployment system enabling highly customizable Kubernetes installation across diverse infrastructure including bare metal and multiple cloud providers.

- Rancher RKE2: SUSE-maintained Kubernetes distribution emphasizing security with SELinux support and FIPS compliance for regulated environments.

- k0s: Zero-friction Kubernetes distribution packaging all components into a single binary, simplifying air-gapped and edge deployments.

Technical Architecture Comparison

AWS EKS Architecture

- Dedicated control plane per cluster spanning minimum three Availability Zones

- AWS-managed etcd database replicated across three AZs with automatic backup

- Minimum two API server instances running in separate AZs for high availability

- VPC CNI plugin assigning actual VPC IP addresses to every pod by default

- IAM Roles for Service Accounts (IRSA) enabling fine-grained pod-level AWS permissions

- Managed add-ons for CoreDNS, kube-proxy, VPC CNI, and EBS CSI driver

- EKS Auto Mode optionally extends management to node provisioning and lifecycle
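
To make the IRSA bullet concrete: when a pod's service account is bound to an IAM role, EKS injects the `AWS_ROLE_ARN` and `AWS_WEB_IDENTITY_TOKEN_FILE` environment variables into the pod, and AWS SDKs exchange the projected token for temporary credentials. The sketch below only inspects those two variables; the function name `irsa_status` is an illustrative assumption, not part of any AWS library.

```python
import os
from typing import Mapping, Optional


def irsa_status(env: Optional[Mapping[str, str]] = None) -> str:
    """Report whether IRSA-style web identity settings are present."""
    env = os.environ if env is None else env
    role_arn = env.get("AWS_ROLE_ARN")
    token_file = env.get("AWS_WEB_IDENTITY_TOKEN_FILE")
    if role_arn and token_file:
        return f"IRSA configured for role {role_arn}"
    if role_arn or token_file:
        return "IRSA partially configured: one variable is missing"
    return "IRSA not configured: SDK will fall back to other credential sources"


if __name__ == "__main__":
    # Run inside a pod to see what the SDK credential chain will find.
    print(irsa_status())
```

Running a check like this in a debug container is a quick way to tell a missing service account annotation apart from an IAM policy problem.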

Self-Managed Kubernetes Architecture

- Customer-provisioned master nodes on EC2, typically three for Raft quorum

- Self-managed etcd cluster requiring backup, restore, and compaction procedures

- Manual load balancer configuration for API server high availability

- CNI plugin of your choice: Calico, Cilium, Flannel, Weave, or Amazon VPC CNI

- OIDC or webhook authentication for AWS service integration requiring manual configuration

- All add-ons managed independently with version compatibility responsibility

- Complete cluster lifecycle management including bootstrapping, upgrades, and decommission
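
The "three for Raft quorum" sizing follows from simple majority arithmetic. As a minimal sketch (the function names are illustrative), the snippet below computes the majority size and the number of member failures an etcd cluster of a given size can survive.

```python
def quorum_size(members: int) -> int:
    """Votes needed for a Raft majority among `members` etcd nodes."""
    if members < 1:
        raise ValueError("an etcd cluster needs at least one member")
    return members // 2 + 1


def failures_tolerated(members: int) -> int:
    """Members that can be lost while a majority still remains."""
    return members - quorum_size(members)


if __name__ == "__main__":
    for n in range(1, 6):
        print(f"{n} members: quorum {quorum_size(n)}, "
              f"tolerates {failures_tolerated(n)} failure(s)")
```

Four members tolerate the same single failure as three, which is why odd cluster sizes are the usual choice.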

Control Plane Responsibility Model

What AWS Manages in EKS

- Kubernetes API server provisioning and scaling across AZs

- etcd cluster management, backup, and recovery

- Control plane security patching and OS updates

- Kubernetes version upgrades for the control plane

- Certificate authority management and rotation

- Control plane high availability and automatic failover

- Integration with AWS infrastructure for VPC, IAM, and networking

What You Always Manage

- Worker node provisioning, patching, and lifecycle management

- Application deployment configurations and workload management

- Kubernetes RBAC policies and namespace access controls

- Network policies and ingress controller configuration

- Persistent storage classes and volume management

- Monitoring, logging, and observability stack setup

- Worker node Kubernetes version (kubelet) upgrades, coordinated with control plane upgrades

Networking Architecture Differences

| Networking Aspect | AWS EKS | Self-Managed Kubernetes |

|---|---|---|

| Default CNI | Amazon VPC CNI assigning real VPC IPs to pods | Your choice: Calico, Cilium, Flannel, Weave, or any CNCF CNI |

| IP Address Usage | Each pod consumes a VPC IP, limiting subnet density | Overlay networks allow higher pod density with virtual IPs |

| Load Balancing | AWS Load Balancer Controller creating ALB and NLB natively | Manual ELB integration or software load balancers like MetalLB |

| Network Policies | Supported via the VPC CNI network policy feature or Calico in policy-only mode | Full CNI choice enables advanced eBPF-based policies with Cilium |

| Service Mesh | Istio, Linkerd, and other meshes supported (AWS App Mesh is scheduled for end of support in 2026) | Complete freedom to install any service mesh without restrictions |
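
The IP address row can be made concrete. Without prefix delegation, the commonly cited VPC CNI limit is ENIs × (IPv4 addresses per ENI − 1) + 2 pods per node. The sketch below applies that formula; the ENI limits in the table are values I believe match the EC2 documentation for these instance types, so verify them before relying on the output.

```python
# (max ENIs, IPv4 addresses per ENI) per instance type.
# Assumed from the EC2 ENI limits table; confirm against current AWS docs.
ENI_LIMITS = {
    "t3.medium": (3, 6),
    "m5.large": (3, 10),
    "m5.xlarge": (4, 15),
}


def max_pods(instance_type: str) -> int:
    """Pod limit under VPC CNI secondary-IP mode (no prefix delegation)."""
    enis, ips_per_eni = ENI_LIMITS[instance_type]
    # One address per ENI is the ENI's own primary IP; the +2 covers
    # host-network pods that do not consume a VPC address.
    return enis * (ips_per_eni - 1) + 2


if __name__ == "__main__":
    for itype in ENI_LIMITS:
        print(f"{itype}: up to {max_pods(itype)} pods")
```

An overlay CNI on a self-managed cluster is not bound by this formula, which is the density difference the table describes.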

Use Cases and Deployment Scenarios

When to Choose AWS EKS

- AWS-first organizations: Teams already invested in AWS services, IAM policies, and cloud infrastructure who want native Kubernetes integration

- Platform teams without SRE depth: Organizations lacking Kubernetes control plane expertise who need production-grade reliability without hiring specialists

- Rapid deployment requirements: Startups and scale-ups needing production Kubernetes within days rather than weeks of platform engineering effort

- Compliance-sensitive workloads: Industries requiring SOC2, HIPAA, PCI-DSS compliance with AWS-provided audit documentation and shared responsibility model

- Fargate workloads: Serverless container requirements where pod-level isolation and zero node management justify premium per-compute pricing

- Hybrid edge deployments: Organizations using EKS Anywhere to extend unified Kubernetes management to on-premises and remote locations

When to Choose Self-Managed Kubernetes

- Large-scale cluster fleets: Organizations running 50+ clusters where eliminating per-cluster control plane fees generates substantial monthly savings

- Multi-cloud architecture: Platform teams building cloud-agnostic infrastructure that must run identically across AWS, Azure, GCP, and on-premises

- Advanced networking requirements: Workloads needing eBPF-based networking, custom CNI configurations, or network topologies incompatible with VPC CNI limitations

- Air-gapped deployments: Government and defense workloads running in isolated networks where cloud provider APIs are unavailable

- Regulatory data residency: Organizations requiring complete control over where Kubernetes metadata and configuration data resides

- Mature platform engineering teams: Organizations with dedicated SRE teams capable of operating control plane infrastructure with appropriate on-call coverage

Industry Adoption Patterns

| Industry | AWS EKS Use Cases | Self-Managed Kubernetes Use Cases |

|---|---|---|

| Financial Services | Customer-facing banking APIs, fraud detection pipelines, AWS-native FinTech platforms | Air-gapped trading systems, multi-cloud risk platforms, proprietary compliance environments |

| Healthcare | HIPAA-eligible EHR integrations, telemedicine platforms, cloud-native diagnostics | On-premises medical imaging clusters, sovereign health data environments |

| E-commerce | Seasonal scaling workloads, microservices on AWS, Fargate-based event-driven processing | Multi-CDN architectures spanning cloud providers, custom caching and networking layers |

| Media & Streaming | Video transcoding pipelines on EC2 Spot, CDN integration via AWS services | Multi-region bare metal clusters for ultra-low latency delivery at massive scale |

| Government & Defense | AWS GovCloud EKS for FedRAMP workloads, cloud-connected mission systems | Classified air-gapped networks, sovereign infrastructure requirements |

12 Critical Differences: AWS EKS vs Self-Managed Kubernetes

| Aspect | AWS EKS | Self-Managed Kubernetes |

|---|---|---|

| Control Plane Management | AWS manages API server, etcd, scheduler, and controller manager entirely | Team owns all control plane components, maintenance, and availability |

| Control Plane Cost | $0.10/hour per cluster ($72/month) regardless of workload size | No per-cluster fee; pay only for master node EC2 instances you provision |

| Setup Complexity | Create cluster in minutes via Console, CLI, Terraform, or eksctl | Hours to days of bootstrapping using kubeadm, kops, or Kubespray |

| Kubernetes Version Availability | Typically 1-4 weeks lag after upstream release for EKS certification | Immediate access to any upstream version including alpha and beta releases |

| AWS Service Integration | Native IAM, VPC, ALB, EBS CSI, CloudWatch, ECR integration out of the box | Manual configuration required for each AWS service integration |

| Networking Model | VPC CNI assigns real VPC IPs to pods, consuming subnet address space | Choose any CNI including overlay networks that do not consume VPC IPs |

| Upgrade Process | One-click control plane upgrades; worker node upgrades require manual action | Full upgrade orchestration responsibility across all nodes and components |

| High Availability | Multi-AZ control plane HA built-in with AWS SLA backing | Must architect and maintain multi-master HA with etcd quorum manually |

| Compliance Documentation | AWS provides audit reports, compliance attestations, and shared responsibility documentation | Team must produce all compliance evidence for control plane infrastructure independently |

| Cloud Portability | Deep AWS dependencies reduce portability to other cloud providers | Cloud-agnostic deployments enable identical clusters across any infrastructure |

| etcd Access | No direct etcd access; AWS manages backup and recovery entirely | Full etcd access enabling custom backup strategies and direct cluster state inspection |

| Serverless Option | AWS Fargate eliminates node management for suitable pod workloads | No equivalent serverless option; all workloads run on nodes your team provisions and operates |

Implementation and Migration Strategy

Getting Started: Platform Selection Framework

- Assess platform engineering maturity: Evaluate whether your team has operated Kubernetes control planes in production, managed etcd failures, and executed multi-node cluster upgrades under time pressure.

- Quantify AWS commitment: Determine how deeply embedded your infrastructure is in AWS services, whether migration to another provider is realistic, and whether AWS lock-in is an acceptable business risk.

- Model control plane costs at scale: Calculate your projected cluster count over 24 months and multiply by $72/month to determine whether EKS control plane fees represent meaningful spend versus operational engineering costs.

- Evaluate compliance requirements: Identify whether your industry requires specific data residency, air-gap, or compliance documentation that managed services cannot provide, or that they satisfy more easily than a self-managed cluster would.

- Analyze networking constraints: Determine whether VPC IP address consumption under VPC CNI creates subnet density problems at your projected pod scale, and whether overlay networking would provide better economics.

- Consider operational cost honestly: Weigh the fully loaded cost of dedicated platform engineers managing control plane infrastructure, on-call rotations, and incident response against the EKS control plane fee to determine the true savings from self-management.
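
The cost-modeling step in the list above can be sketched as a small projection. Only the $72 monthly fee comes from this guide; the linear growth path and the function names are assumptions for illustration.

```python
EKS_FEE_PER_CLUSTER_MONTH = 72.0  # $0.10/hour, as used throughout this guide


def projected_cluster_counts(start: int, end: int, months: int = 24) -> list[int]:
    """Linearly interpolate cluster count from `start` to `end` (assumed shape)."""
    if months < 2:
        raise ValueError("need at least two months to interpolate")
    step = (end - start) / (months - 1)
    return [round(start + step * m) for m in range(months)]


def cumulative_control_plane_fees(counts: list[int]) -> float:
    """Total EKS control plane fees over the projected months."""
    return sum(count * EKS_FEE_PER_CLUSTER_MONTH for count in counts)


if __name__ == "__main__":
    counts = projected_cluster_counts(start=10, end=10)
    print(f"24-month fees: ${cumulative_control_plane_fees(counts):,.0f}")
```

Ten clusters held flat for 24 months come to $17,280 in fees, which is the figure to set against the fully loaded engineering cost in the last step of the list.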

Deploying AWS EKS: Step-by-Step

Phase 1: Infrastructure Preparation

- Design VPC with sufficient subnet CIDR blocks for pod IP allocation

- Create IAM roles for the EKS cluster and node groups

- Configure security groups for control plane and worker node communication

- Install eksctl, kubectl, and AWS CLI with appropriate credentials

- Plan node group sizing based on workload resource requirements
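
For the subnet sizing step, a rough capacity check helps before any cluster exists. The sketch below assumes one VPC address per node and one per pod, and subtracts the five addresses AWS reserves in every subnet. It is a lower bound on what you need, since the VPC CNI also keeps warm spare addresses on each node; the function names are illustrative.

```python
import ipaddress

# AWS reserves the first four addresses and the last one in every subnet.
AWS_RESERVED_PER_SUBNET = 5


def usable_ips(cidr: str) -> int:
    """Addresses in the subnet that can actually be assigned."""
    network = ipaddress.ip_network(cidr)
    return max(network.num_addresses - AWS_RESERVED_PER_SUBNET, 0)


def subnet_fits(cidr: str, nodes: int, pods_per_node: int) -> bool:
    """True if the subnet can give one IP to every node and every pod."""
    needed = nodes * (1 + pods_per_node)
    return needed <= usable_ips(cidr)


if __name__ == "__main__":
    for cidr in ("10.0.0.0/24", "10.0.0.0/20"):
        ok = subnet_fits(cidr, nodes=10, pods_per_node=29)
        print(f"{cidr}: {usable_ips(cidr)} usable, fits 10 nodes x 29 pods: {ok}")
```

A /24 cannot hold ten nodes at 29 pods each, while a /20 can, which is why the pod IP budget should drive CIDR planning rather than node count alone.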

Phase 2: Cluster Creation

- Create EKS cluster using eksctl create cluster or Terraform EKS module

- Configure managed node groups or Fargate profiles for your compute model

- Install AWS Load Balancer Controller for ALB and NLB provisioning

- Configure EBS CSI driver for persistent volume support

- Enable IRSA and create service account IAM role bindings for workloads
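
Several Phase 2 steps can be captured in a single eksctl cluster config. The sketch below builds one as a Python dictionary and prints it as JSON. The field names follow the `eksctl.io/v1alpha5` `ClusterConfig` schema as I understand it; the cluster name, region, version string, and node group sizes are placeholders, and passing JSON instead of YAML to `eksctl create cluster -f` relies on JSON being valid YAML, which is an assumption worth testing in your environment.

```python
import json


def eksctl_cluster_config(name: str, region: str, version: str,
                          instance_type: str = "m5.large",
                          min_size: int = 2, max_size: int = 6) -> dict:
    """Build a minimal eksctl ClusterConfig with one managed node group."""
    return {
        "apiVersion": "eksctl.io/v1alpha5",
        "kind": "ClusterConfig",
        "metadata": {"name": name, "region": region, "version": version},
        # The OIDC provider is the prerequisite for IRSA role bindings.
        "iam": {"withOIDC": True},
        "managedNodeGroups": [{
            "name": "default",
            "instanceType": instance_type,
            "minSize": min_size,
            "maxSize": max_size,
            "desiredCapacity": min_size,
        }],
        "addons": [
            {"name": "vpc-cni"},
            {"name": "coredns"},
            {"name": "kube-proxy"},
            {"name": "aws-ebs-csi-driver"},
        ],
    }


if __name__ == "__main__":
    # Placeholder values; substitute your own name, region, and version.
    print(json.dumps(eksctl_cluster_config("demo", "us-east-1", "1.32"), indent=2))
```

Keeping the config in version control gives the same reproducibility benefit as the Terraform route, with less initial setup.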

Phase 3: Operational Readiness

- Configure CloudWatch Container Insights for metrics and logging

- Install Karpenter for cost-efficient node autoscaling and right-sizing

- Implement GitOps workflow using ArgoCD or Flux for declarative deployments

- Establish Kubernetes version upgrade schedule aligned with EKS support windows

- Configure backup strategy for persistent volumes and cluster configuration

Deploying Self-Managed Kubernetes on AWS

Phase 1: Control Plane Setup

- Provision three master EC2 instances across separate Availability Zones

- Configure NLB or HAProxy for API server load balancing

- Initialize cluster with kubeadm or kops with production-grade configuration

- Verify etcd cluster health and configure automated backup to S3

- Harden API server with audit logging, encryption at rest, and admission webhooks
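
The etcd backup step might look like the sketch below, which takes a snapshot with `etcdctl snapshot save` and uploads it with boto3. The certificate paths are the kubeadm defaults; the bucket, cluster name, local snapshot path, and S3 key layout are hypothetical, and scheduling (cron, systemd timer, or a CronJob) is left out.

```python
import datetime
import subprocess

# Default kubeadm certificate locations; adjust for other bootstrap tools.
ETCD_CA = "/etc/kubernetes/pki/etcd/ca.crt"
ETCD_CERT = "/etc/kubernetes/pki/etcd/server.crt"
ETCD_KEY = "/etc/kubernetes/pki/etcd/server.key"


def snapshot_command(snapshot_path: str,
                     endpoint: str = "https://127.0.0.1:2379") -> list[str]:
    """Build the etcdctl invocation that writes a snapshot file."""
    return [
        "etcdctl", "snapshot", "save", snapshot_path,
        f"--endpoints={endpoint}",
        f"--cacert={ETCD_CA}",
        f"--cert={ETCD_CERT}",
        f"--key={ETCD_KEY}",
    ]


def s3_key(cluster: str, taken_at: datetime.datetime) -> str:
    """Date-partitioned object key (a hypothetical naming scheme)."""
    return f"etcd-backups/{cluster}/{taken_at:%Y/%m/%d}/snapshot-{taken_at:%H%M%S}.db"


def backup_to_s3(cluster: str, bucket: str,
                 snapshot_path: str = "/var/backups/etcd-snapshot.db") -> str:
    """Snapshot etcd on this control plane node and upload it to S3."""
    import boto3  # imported lazily so the helpers above need no AWS dependencies

    subprocess.run(snapshot_command(snapshot_path), check=True)
    key = s3_key(cluster, datetime.datetime.now(datetime.timezone.utc))
    boto3.client("s3").upload_file(snapshot_path, bucket, key)
    return key
```

A backup is only as good as its restore, so pair a job like this with a periodic restore into a scratch cluster.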

Phase 2: Node and Network Setup

- Join worker nodes to cluster and verify API server connectivity

- Install chosen CNI plugin and validate pod networking functionality

- Configure AWS cloud provider or cloud-controller-manager for EC2 integration

- Set up cluster autoscaler or Karpenter for node scaling automation

- Install external-dns for Route 53 integration and cert-manager for TLS

Phase 3: Day-2 Operations

- Establish runbooks for control plane failure scenarios and etcd recovery

- Configure monitoring for etcd health, API server latency, and controller lag

- Create upgrade pipeline testing new Kubernetes versions in staging first

- Implement certificate rotation automation before expiration windows

- Document disaster recovery procedures and test recovery from backup quarterly

Implementation Best Practices

Success Factors

- Use Terraform or CDK for all EKS cluster infrastructure to enable reproducible deployments

- Plan VPC CIDR blocks generously when using EKS VPC CNI to avoid IP exhaustion

- Implement Karpenter over cluster-autoscaler for superior cost efficiency and faster scaling

- Establish clear Kubernetes version upgrade cadence before clusters fall out of support

- Use managed node groups over self-managed nodes in EKS unless specific customization is required

- For self-managed clusters, automate etcd backups to S3 at least every 30 minutes

Common Pitfalls

- Never underestimate VPC IP exhaustion in EKS; plan subnet sizing before cluster creation

- Avoid running self-managed Kubernetes without automated etcd backup and tested restore procedures

- Don’t delay Kubernetes version upgrades; both EKS and self-managed clusters accumulate technical debt quickly

- Never run single-master self-managed Kubernetes in production; always use odd-number quorum of three or five

- Avoid mixing EKS managed node groups and self-managed nodes without clear operational procedures for each

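- Don’t skip audit logging: enable Kubernetes API server audit logs (on EKS, via control plane logging to CloudWatch Logs) alongside CloudTrail for AWS API calls; both are essential for security incident investigation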

Cost and ROI Analysis

EKS Control Plane

- Cost: $0.10/hour per cluster

- Monthly: ~$72 per cluster

- Annual (10 clusters): ~$8,640

Self-Managed Masters

- 3x m5.xlarge masters: ~$420/month

- Annual (10 clusters): ~$50,400

- Break-even: At massive scale only

Operational Labor Delta

- EKS platform ops: ~0.25 FTE/cluster

- Self-managed ops: ~0.5 FTE/cluster

- Annual FTE cost delta: ~$80,000+

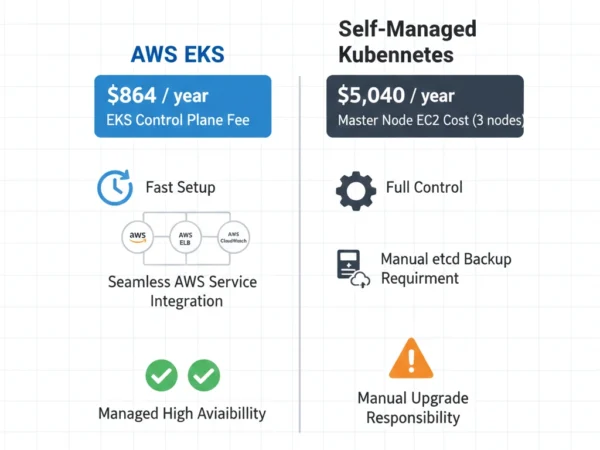

Total Cost of Ownership: 10-Node Production Cluster, First Year

| Cost Component | AWS EKS | Self-Managed Kubernetes on AWS |

|---|---|---|

| Control Plane | $864 (EKS cluster fee at $0.10/hr) | $5,040 (3x m5.xlarge master nodes) |

| Worker Node Infrastructure | $18,000 (same EC2 costs for both options) | $18,000 (same EC2 costs for both options) |

| Platform Engineering Labor | $40,000 (0.5 FTE at $80K fully loaded) | $80,000 (1 FTE at $80K fully loaded) |

| Training & Certification | $3,000 (EKS-specific training, existing K8s knowledge assumed) | $8,000 (CKA/CKS, kubeadm, etcd operations training) |

| Tooling & Monitoring | $6,000 (CloudWatch, Prometheus, Grafana) | $8,000 (additional etcd monitoring, backup tooling) |

| Incident Response Overhead | $2,000 (worker node issues only) | $12,000 (control plane incidents, etcd recovery drills) |

| Total First Year | $69,864 | $131,040 |

| Cost Difference | Baseline | +88% vs EKS for a single cluster |
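
The totals in the table can be reproduced with a few lines, which also makes it easy to swap in your own figures. The numbers below are copied from the table; the dictionary keys and function names are illustrative.

```python
EKS = {
    "control_plane": 864,
    "workers": 18_000,
    "labor": 40_000,
    "training": 3_000,
    "tooling": 6_000,
    "incidents": 2_000,
}
SELF_MANAGED = {
    "control_plane": 5_040,
    "workers": 18_000,
    "labor": 80_000,
    "training": 8_000,
    "tooling": 8_000,
    "incidents": 12_000,
}


def total(costs: dict) -> int:
    """Sum every first-year cost component."""
    return sum(costs.values())


def premium_percent(baseline: float, other: float) -> float:
    """How much more `other` costs than `baseline`, in percent."""
    return (other - baseline) / baseline * 100


if __name__ == "__main__":
    eks_total, self_total = total(EKS), total(SELF_MANAGED)
    print(f"EKS: ${eks_total:,}  Self-managed: ${self_total:,}")
    print(f"Self-managed premium: {premium_percent(eks_total, self_total):.0f}%")
```

Labor accounts for more than half of both totals, so the staffing assumption moves the result far more than the control plane line does.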

The economics shift as cluster count scales, but less than the headline fee suggests. At $0.10 per hour, EKS control plane fees come to roughly $864 per cluster annually, or about $43,200 across a 50-cluster fleet. Self-managing removes that fee but replaces it with control plane compute: the three m5.xlarge masters modeled above cost about $5,040 per cluster per year, so infrastructure savings appear only with smaller or heavily discounted master instances. Self-managed operational labor also scales with cluster count, and organizations rarely achieve the operational economies of scale that make the math compelling until they reach 30 or more clusters with a dedicated, experienced platform team. For the vast majority of organizations operating fewer than 20 clusters, EKS delivers lower total cost of ownership when engineering labor is factored in honestly. Reserved Instance and Savings Plan discounts apply equally to both deployment models for worker node compute, leaving the control plane cost and operational labor differential as the primary financial decision factors.

ROI Break-Even Analysis

EKS Becomes More Expensive When:

- Running 30+ clusters where control plane fees exceed operational labor savings

- Operating at scale where Reserved Instances on master nodes significantly reduce compute cost

- Using custom networking that VPC CNI cannot support, requiring architectural workarounds

- IP address exhaustion forces expensive VPC redesign to support pod density requirements

- Extended support fees apply when running Kubernetes versions past standard EKS support, raising the control plane fee to $0.60/hour per cluster ($0.50/hour above the standard rate)

Self-Managed Becomes More Expensive When:

- Control plane incidents require unplanned senior engineer time beyond budgeted hours

- etcd corruption events cause extended downtime with business revenue impact

- Manual upgrade processes consume disproportionate platform team capacity each quarter

- Compliance audits require extensive manual documentation of control plane security posture

- Recruiting and retaining experienced Kubernetes control plane operators commands salary premium

Strategic Decision Framework

Shared Responsibility and Strategic Fit

The AWS EKS vs self-managed Kubernetes decision is fundamentally about where your organization draws the line on infrastructure ownership. Just as choosing between Kubernetes and Docker Swarm depends on operational maturity and scale requirements, deciding between managed and self-managed Kubernetes on AWS reflects whether your engineering investment belongs in control plane operations or application delivery. Organizations achieve the best outcomes when they honestly assess their platform engineering depth, cluster scale economics, and compliance posture rather than defaulting to either the managed simplicity narrative or the control maximalist philosophy.

Decision Matrix

| Decision Factor | Choose AWS EKS When… | Choose Self-Managed When… |

|---|---|---|

| Platform Engineering Team | Team lacks control plane experience or has limited bandwidth | Dedicated SRE team with Kubernetes internals expertise and on-call capability |

| Cluster Count | Operating fewer than 20-30 clusters in production | Fleet of 30+ clusters where control plane fee savings become material |

| AWS Dependency | Organization is AWS-primary with no near-term multi-cloud plans | Multi-cloud strategy requires cloud-agnostic Kubernetes configurations |

| Networking Requirements | Standard VPC networking with acceptable pod IP density | High pod density, custom CNI, or networking incompatible with VPC CNI |

| Compliance Posture | AWS shared responsibility model satisfies compliance requirements | Air-gapped, data residency, or custom compliance documentation required |

| Kubernetes Version Requirements | Standard version cadence with EKS support window acceptable | Need immediate latest version access or must pin to unsupported versions |

| etcd Requirements | AWS-managed etcd backup and recovery sufficient | Direct etcd access needed for custom audit, backup, or compliance workflows |

| Serverless Requirements | Fargate serverless pods provide value for bursty or isolated workloads | All workloads suit node-based compute, Fargate overhead not justified |
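
The matrix can be turned into a rough screening function. This is a sketch under stated assumptions, not a scoring method from this guide: the `Profile` fields, the rule that air-gapped environments force self-managed, and the "two or more signals" threshold are all illustrative choices. Only the 30-cluster threshold comes from the matrix.

```python
from dataclasses import dataclass


@dataclass
class Profile:
    cluster_count: int
    has_control_plane_sre_team: bool
    multi_cloud_required: bool
    needs_custom_cni: bool
    air_gapped_or_sovereign: bool
    needs_etcd_access: bool


def self_managed_signals(p: Profile) -> list[str]:
    """List the matrix rows that point toward self-managed Kubernetes."""
    checks = [
        (p.cluster_count >= 30, "cluster count"),
        (p.multi_cloud_required, "multi-cloud"),
        (p.needs_custom_cni, "networking"),
        (p.air_gapped_or_sovereign, "compliance"),
        (p.needs_etcd_access, "etcd access"),
    ]
    return [label for matched, label in checks if matched]


def recommend(p: Profile) -> str:
    """Return 'EKS' or 'self-managed' using assumed screening rules."""
    if p.air_gapped_or_sovereign:
        return "self-managed"  # a managed cloud service is not an option
    if not p.has_control_plane_sre_team:
        return "EKS"  # without operators, other signals do not matter
    return "self-managed" if len(self_managed_signals(p)) >= 2 else "EKS"
```

A function like this is best used to structure the conversation; the weights any real organization applies will differ.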

Hybrid Approaches and Migration Patterns

EKS as Starting Point

Many organizations begin with EKS to accelerate initial Kubernetes adoption, then evaluate migration to self-managed as scale justifies the investment:

- Launch production workloads on EKS within days, learning Kubernetes operations without control plane burden

- Build platform engineering capability and Kubernetes expertise over 12-18 months

- Evaluate cluster count trajectory and operational maturity annually

- Migrate high-value clusters to self-managed when team capability and scale economics align

- Maintain EKS clusters for teams lacking self-managed expertise in a hybrid model

Self-Managed to EKS Migration

Organizations running self-managed Kubernetes on AWS frequently migrate to EKS when operational burden becomes unsustainable:

- Conduct inventory of self-managed cluster workloads and custom control plane configurations

- Identify configurations incompatible with EKS and plan migration approach

- Pilot EKS migration with non-critical cluster to validate AWS integration patterns

- Migrate workloads incrementally using blue-green cluster strategy

- Reclaim platform engineering capacity previously dedicated to control plane operations

Strategic Recommendation for 2026:

For the majority of organizations, AWS EKS delivers superior total cost of ownership when operational engineering labor is included in the analysis. Similar to how SD-WAN replaced manual network configuration by abstracting operational complexity, EKS abstracts control plane operations that deliver no competitive differentiation. Reserve self-managed Kubernetes for scenarios where your cluster fleet is large enough to generate meaningful fee savings, your team possesses genuine control plane expertise with appropriate on-call depth, compliance or networking requirements are truly incompatible with EKS constraints, or multi-cloud neutrality is a non-negotiable architectural requirement. Organizations that choose self-managed Kubernetes because of perceived cost savings without accounting for engineering labor, incident risk, and operational overhead consistently underestimate the true investment required to operate production-grade Kubernetes control planes reliably.

Making the Right Kubernetes Deployment Decision in 2026

The AWS EKS vs self-managed Kubernetes decision ultimately reduces to an honest assessment of what your organization’s engineering resources are best spent doing. For the 79% of Kubernetes users who have already chosen managed services, the answer is clear: AWS managing the control plane frees engineering capacity for application delivery, feature development, and workload optimization rather than infrastructure operations.

Choose AWS EKS When:

- Team lacks deep Kubernetes control plane expertise or on-call capacity

- AWS is your primary or sole cloud provider with deep service integration needs

- Operating fewer than 20-30 clusters where control plane fees are modest

- Compliance documentation and the shared responsibility model satisfy your requirements

- Fargate serverless pods provide value for your workload mix

- Speed to production and operational simplicity are primary priorities

Choose Self-Managed Kubernetes When:

- Operating 30+ clusters where per-cluster fee elimination generates real savings

- Multi-cloud or cloud-agnostic architecture is a non-negotiable requirement

- Dedicated platform SRE team with control plane expertise and 24/7 on-call

- Networking requirements are incompatible with VPC CNI constraints

- Air-gapped, sovereign, or compliance requirements preclude managed cloud services

- Need immediate access to latest Kubernetes versions or custom control plane configurations

Whether you are a developer, platform engineer, or IT leader, the container orchestration platform you operate shapes every aspect of how your organization delivers software. AWS EKS reduces infrastructure overhead and accelerates time-to-production for AWS-focused teams while self-managed Kubernetes rewards organizations with the platform depth, scale economics, or architectural requirements that justify the investment. Success comes not from following market trends but from matching deployment model to genuine organizational capability and business need, then executing whichever approach you choose with operational excellence.
